Tensor-Train Decomposition

日本語版: Tensor-Train Decomposition (JP)

Tensor Decomposition Technology for Massive Data

Fast Data Compression via Parallel Tensor-Train Decomposition (TTD)

Modern scientific computing, AI, and physical simulation increasingly rely on massive data represented as multidimensional arrays, or tensors. However, as the number of dimensions grows, the data size increases exponentially, leading to the well-known “curse of dimensionality.” This makes storage and analysis difficult if the data are handled in their original form. A powerful approach to this problem is Tensor Train Decomposition (TTD), which represents a high-dimensional tensor as a sequence of smaller tensors. By doing so, TTD dramatically reduces the number of parameters required to represent the data and enables efficient storage and computation.

Conventional TTD algorithms such as TT-SVD are based on sequential processing, which makes both computation time and memory consumption very large for massive tensors. In particular, these methods do not take full advantage of large-scale parallel environments such as supercomputers.

To address this issue, we proposed PTTD (Parallel Tensor Train Decomposition), a parallel tensor decomposition algorithm. In PTTD, a massive tensor is divided into multiple subtensors, each subtensor is decomposed independently, and the results are then integrated to construct the global TT representation. During integration, TT-rounding is used to remove unnecessary ranks and achieve efficient compression. In addition, we introduced a NOQR-rounding scheme that omits QR decomposition in order to further reduce the computational cost.

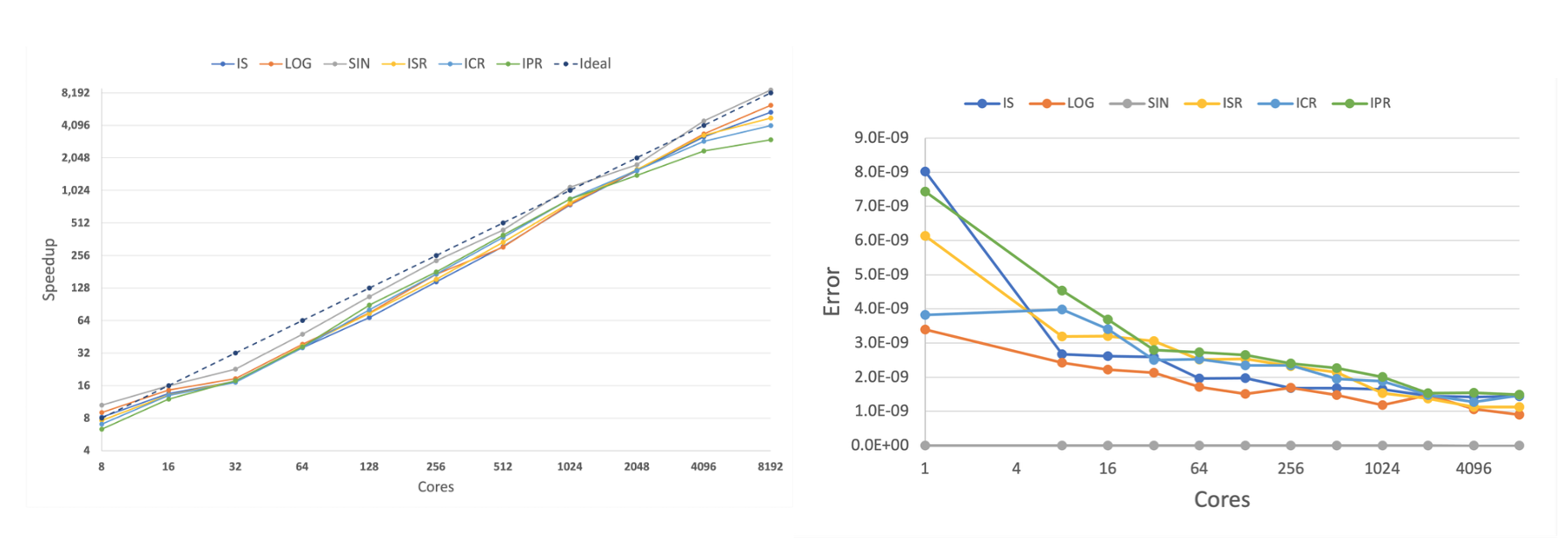

Experiments on a supercomputer demonstrated extremely high parallel performance. Using up to 8192 cores, the proposed method achieved more than 3000x to 8700x speedup compared with conventional methods, showing that even very large tensors can be decomposed efficiently. The approximation error remained within the theoretically guaranteed range, while maintaining a very high compression ratio of more than 99.99%.

This work provides a core technology for massive-scale data analysis in fields such as AI, machine learning, and scientific simulation. Future work will extend the method to even larger data analysis problems through more efficient algorithms and automated data distribution strategies.

Key Publications

- Shiyao Xie, Akinori Miura, and Kenji Ono, Error-bounded Scalable Parallel Tensor Train Decomposition, 2023 IEEE International Parallel and Distributed Processing Symposium Workshops (IPDPSW), 2023.